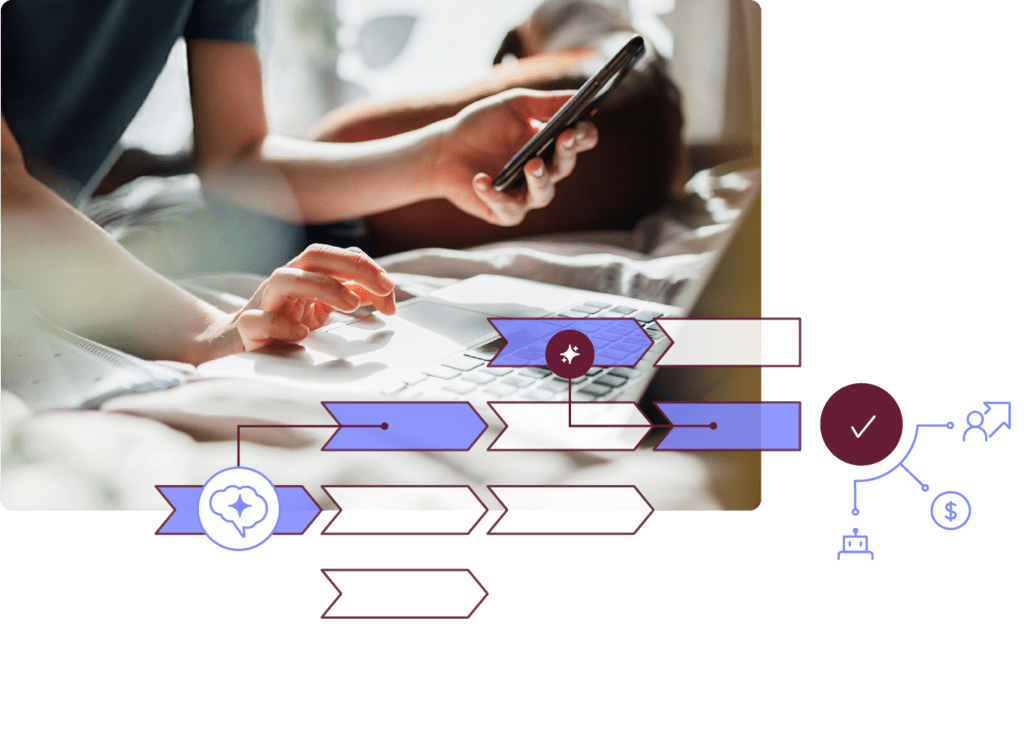

Tech debt has met its match

Reimagine your workflows and rewrite the rules of transformation with the full power of enterprise AI.

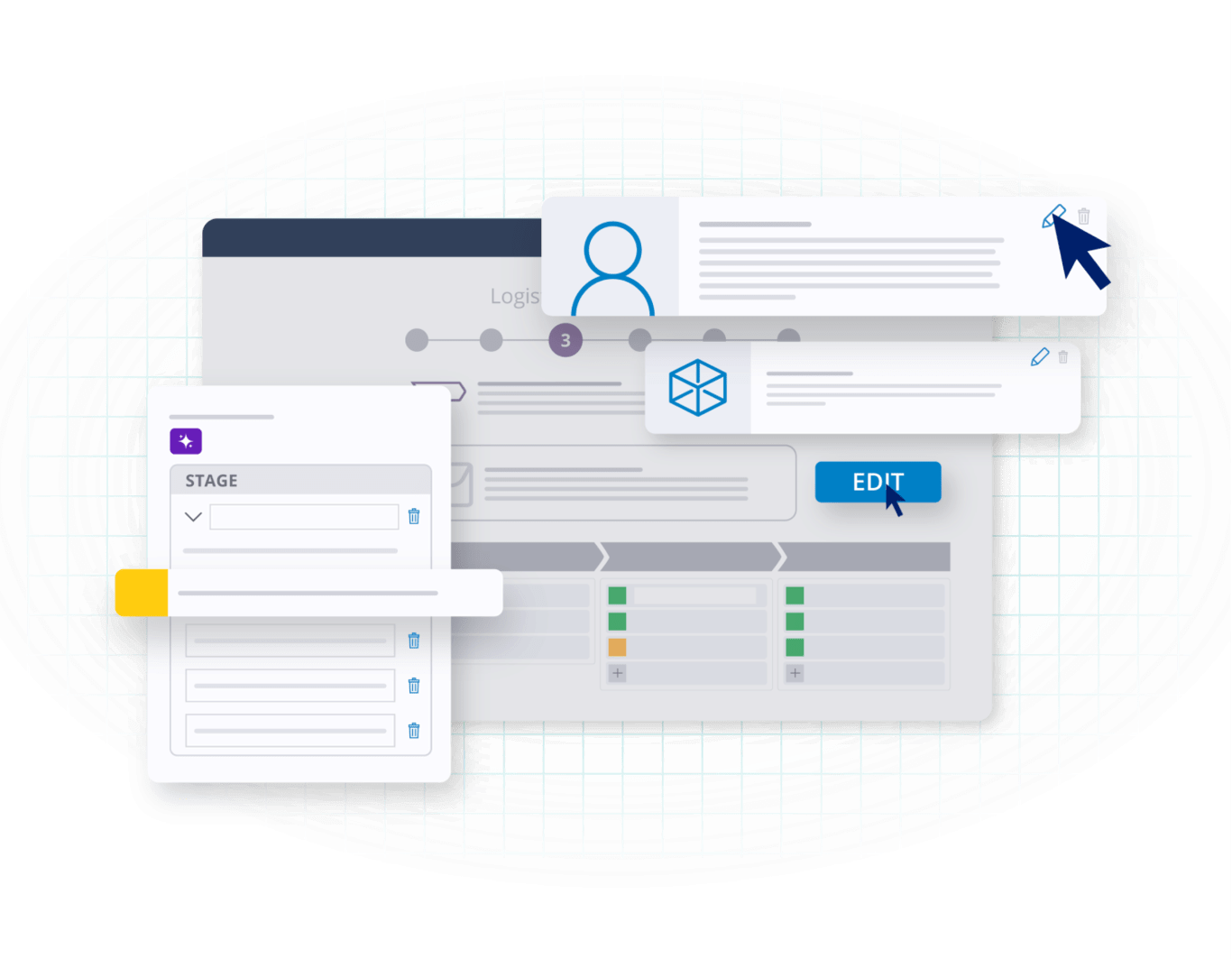

Take our Design Agents for a spin

Transform how you innovate with Pega Blueprint. Go from idea to app, or strategy to marketing rollout, in a flash.

Enterprise solutions, at the ready

Pega is architected to run your most critical journeys. Explore our key solutions:

Enterprise solutions hand-picked for you

Tell us your specific challenges. We’ll recommend the best tech to help you achieve incredible outcomes.

Great Eastern retires legacy systems and redefines service

With Pega, Great Eastern replaced outdated workflows with intelligent automation – boosting efficiency, reducing costs, and elevating every customer interaction.

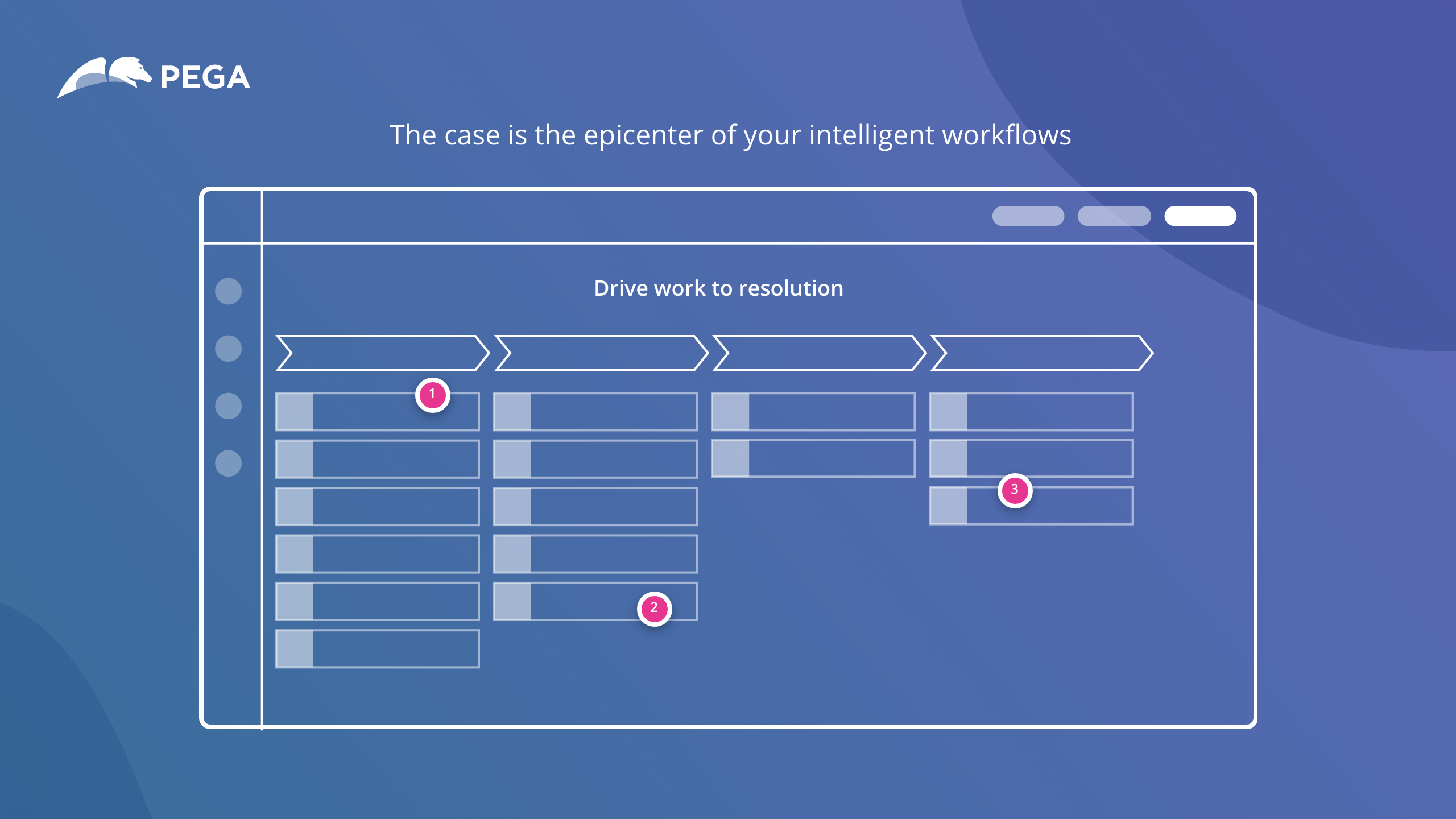

Learn why we’re the enterprise transformation company™

Unlock future-defining outcomes on the only platform with enterprise evolution built right in.