Use AI responsibly

Mitigate risks and promote trust

What is responsible AI?

Responsible AI means developing or using artificial intelligence in a way that is ethical, transparent, fair, and accountable, so that it is both safe for society and consistent with human values.

Why is responsible AI important?

Using AI responsibly is critical to protecting consumer privacy, avoiding discrimination, and preventing harm. AI that violates consumer trust can damage a brand’s reputation, breach regulatory requirements, and have negative impacts on society.

Benefits of responsible AI

- Build trust with users and stakeholders

People, especially consumers, are more likely to adopt and interact positively with AI systems they trust to be fair, transparent, and ethical.

- Drive inclusive and fair outcomes

Prioritizing fairness and inclusivity helps ensure that AI systems serve diverse populations without bias.

- Stay ahead of regulatory requirements

As regulators introduce more rules governing AI, prioritizing responsible AI helps organizations comply with these evolving legal frameworks and avoid fines and legal issues.

- Manage risk

Identify and mitigate risks early, including ethical risks, reputational risks, and potential legal liabilities, particularly in regulated areas.

- Make better AI-powered decisions

AI systems designed with responsibility in mind often lead to better decision-making, because they are more likely to consider a wider range of factors and implications.

How does responsible AI work?

Responsible AI systems are fair, transparent, empathetic, and robust. For AI to be considered responsible, its decisions must be explainable, it must be hardened against real-world conditions, and it must behave in ways that align with human norms.

The AI Manifesto

Discover best practices, perspectives, and inspiration to help you build AI into your business.

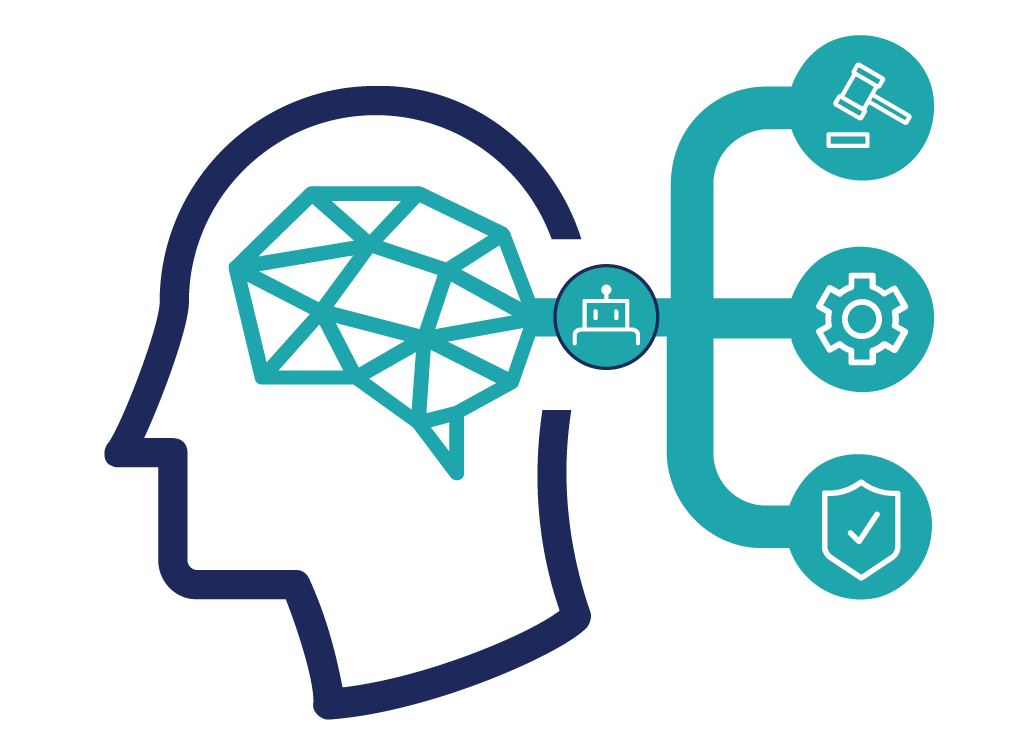

What are the core principles of responsible AI?

Fairness

Artificial intelligence must be unbiased and produce balanced outcomes for all groups.

Transparency

AI-powered decisions must be explainable to a human audience.

Empathy

Empathy means that the AI adheres to social norms and isn’t used in ways that are unethical.

Robustness

AI should be hardened for real-world use by exposing it to a wide variety of training data, scenarios, inputs, and conditions.

Accountability

Accountability in AI is driven by organizational culture. Everyone across departments and functional areas must hold themselves and their AI to a high standard.

What are some potential AI risks?

Risks associated with opaque AI include amplifying discrimination and bias, driving negative feedback loops in which inaccurate data reinforces misinformation, eroding consumer trust, and stifling innovation.

How to prepare for and prevent AI risks

- Oversight and testing

AI systems can rely on dozens, hundreds, or even thousands of models, so achieving fair outcomes requires testing those models frequently and always maintaining human oversight.

- Data accuracy and cleanliness

Historical and training data must be high quality, diverse, free of bias, and representative of the actual population.

- Ethical design

The intent behind the algorithm’s design, and the outcomes the organization wants from it, must be ethical and consistent with social norms, regulations, and the organization’s values.
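To make frequent fairness testing more concrete, here is a minimal Python sketch of one widely used bias check, the disparate impact ratio: the lowest group approval rate divided by the highest. A common heuristic (the "four-fifths rule" from the US Uniform Guidelines on Employee Selection Procedures) flags ratios below 0.8. This is an illustrative assumption, not a description of how Pega Ethical Bias Check works; the function names, the `(group, approved)` data shape, and the sample data are all hypothetical.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Share of positive outcomes per group.

    `decisions` is a list of (group, approved) pairs, e.g. ("A", True).
    Hypothetical data shape chosen for illustration.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, outcome in decisions:
        totals[group] += 1
        if outcome:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 = parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group is approved, so approval rates are equal
    return min(rates.values()) / highest


def passes_four_fifths_rule(decisions, threshold=0.8):
    """False means the model should be flagged for human review."""
    return disparate_impact_ratio(decisions) >= threshold


# Hypothetical audit sample: group A approved 8 of 10 times, group B 4 of 10.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 4 + [("B", False)] * 6
print(disparate_impact_ratio(sample))   # 0.5
print(passes_four_fifths_rule(sample))  # False
```

A single ratio like this is a screening signal, not proof of fairness or bias; in practice it would run on every model version alongside the human oversight described above.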

Emerging trends: The rise of agentic AI

As AI capabilities continue to advance, we’re entering an era where systems can act with increasing autonomy. Known as agentic AI, these systems go beyond passive predictions—they can pursue goals, make decisions, and take actions on their own, often in dynamic environments. While this unlocks powerful new possibilities, it also raises the stakes for responsible governance.

With agentic AI, the risks aren’t just about biased predictions or opaque models—they extend to how agents interpret goals, how they choose actions, and whether their behavior aligns with human values. Ensuring responsible AI now means proactively designing, monitoring, and constraining these autonomous systems so that their actions remain transparent, accountable, and aligned with intended outcomes.
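One way to picture constraining an autonomous agent is a gate that every proposed action must pass through before it runs. The minimal Python sketch below assumes a simple policy: some actions are allowed outright, some need a human decision, everything else is denied, and every decision is written to an audit log. The `ActionGate` class, the action names, and this allowlist-plus-approval policy are hypothetical illustrations, not a product feature or a standard API.

```python
from dataclasses import dataclass, field


@dataclass
class ActionGate:
    """Hypothetical guardrail between an agent and the actions it can take."""

    allowed: set[str]         # actions the agent may run on its own
    needs_approval: set[str]  # actions that require a human decision first
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def request(self, action, approver=None):
        """Return True if the action may proceed; log every decision."""
        if action in self.allowed:
            decision = "allowed"
        elif action in self.needs_approval:
            # `approver` is a callable standing in for a human reviewer.
            approved = approver is not None and approver(action)
            decision = "approved" if approved else "denied: no human approval"
        else:
            decision = "denied: not permitted"
        self.audit_log.append((action, decision))
        return decision in ("allowed", "approved")


gate = ActionGate(allowed={"send_reminder"}, needs_approval={"issue_refund"})
print(gate.request("send_reminder"))                         # True
print(gate.request("issue_refund"))                          # False
print(gate.request("issue_refund", approver=lambda a: True)) # True
print(gate.request("delete_account"))                        # False
```

Denying by default keeps unexpected actions from running, and the audit log gives reviewers a record of what the agent attempted, which supports the transparency and accountability goals above.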

Responsible AI is a winner for everyone

Find out why Pega Ethical Bias Check earned an Anthem Award from the IADAS for preventing discrimination in AI outcomes.